Over the last year, Sahi has steadily undergone enhancements to speed up its proxy.

For outgoing connections, we moved away from raw sockets and started using Java’s HttpURLConnection, primarily for its proxy tunneling capabilities. It also turned out to boost performance over raw sockets, thanks to better socket reuse and buffering.

Caching was allowed for static files so that browsers could just use files from their own cache, instead of fetching from the server.

But there was still one big problem with Sahi’s proxy. Let me explain:

Opening a connection to a server or a proxy is expensive for the browser. In a simple case, a browser will open one connection per request and then close it down when it has read the response. But since it is an expensive process, the HTTP protocol allows something called keep-alive or persistent connections. What this means is that a browser can open a connection, send its request, read the response, then again send the next request using the same connection. This helps in reusing connections and can vastly improve browser performance.

So, how does the browser know that a response is complete before it sends the next request? It knows because the server first sends the length of the content that the browser is supposed to read, via the Content-Length header. Once the browser has read that many bytes, it assumes the response is complete. It can then use the connection for the next request.
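To make the framing concrete, here is a minimal sketch in Java of a client reading one response body off a persistent connection by honouring Content-Length. The class and method names (`ContentLengthReader`, `readBody`) are made up for illustration, and the header parsing is deliberately naive; it is not how any particular browser or Sahi does it.

```java
import java.io.ByteArrayInputStream;
import java.io.DataInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical helper: reads one HTTP response body from a shared connection.
final class ContentLengthReader {

    // Reads one header line, dropping the CR LF terminator.
    static String readLine(InputStream in) throws IOException {
        StringBuilder sb = new StringBuilder();
        int c;
        while ((c = in.read()) != -1 && c != '\n') {
            if (c != '\r') {
                sb.append((char) c);
            }
        }
        return sb.toString();
    }

    // Skips the status line and headers, then reads exactly Content-Length bytes.
    static byte[] readBody(InputStream in) {
        try {
            int length = -1;
            String line;
            while (!(line = readLine(in)).isEmpty()) {
                int colon = line.indexOf(':');
                if (colon > 0 && line.substring(0, colon).trim().equalsIgnoreCase("Content-Length")) {
                    length = Integer.parseInt(line.substring(colon + 1).trim());
                }
            }
            if (length < 0) {
                throw new IOException("no Content-Length header");
            }
            byte[] body = new byte[length];
            // readFully blocks until all 'length' bytes have arrived and reads no further,
            // so the next response on the same connection is left untouched.
            new DataInputStream(in).readFully(body);
            return body;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Two responses back to back on one simulated connection.
        String wire = "HTTP/1.1 200 OK\r\nContent-Length: 5\r\n\r\nhello"
                + "HTTP/1.1 200 OK\r\nContent-Length: 3\r\n\r\nbye";
        InputStream conn = new ByteArrayInputStream(wire.getBytes(StandardCharsets.US_ASCII));
        System.out.println(new String(readBody(conn), StandardCharsets.US_ASCII));
        System.out.println(new String(readBody(conn), StandardCharsets.US_ASCII));
    }
}
```

Because the reader stops exactly at the declared length, the second call starts cleanly at the next status line. That is the property that lets a connection be reused.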

Browsers do one more thing to improve performance. Even before the full content is read, the browser starts to render partial data. This means that if there is a script or css file included in the html page, these included files will start to get fetched (through different connections) while the page is still rendering.

But this is not the case when the browser uses Sahi as its proxy. Sahi modifies the content slightly, so the content length changes. Since the eventual content length is not known in advance, Sahi first reads the full response from the server, modifies it, recalculates the Content-Length, and then sends the new Content-Length to the browser followed by the modified content. This means that while the response is coming in slowly from the server, the proxy is still buffering it, so the browser cannot start rendering partial content or fetching embedded content. (Note that the communication time from the proxy to the browser is negligible compared to proxy-web server communication, since the proxy is either on the same machine as the browser or on the LAN.)

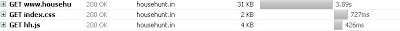

Have a look at how Firefox behaves with and without proxy. Both are keep-alive connections and both have the content-length header set correctly.

Without Proxy: Notice how the css and js files are being fetched before the first response has been fully read.

With Proxy: Notice how the css and js files are being fetched only AFTER the first response has been fully read.

So how can we solve this? HTTP allows one other mechanism. This is called chunked transfer encoding, which is activated via the header Transfer-Encoding: chunked. What this means is that you no longer need to send the content length of the whole response. You break the response into chunks and send the size of a single chunk (written as a hexadecimal number), then its data, then the size of the next chunk followed by its data, and so on. You signal the end of the response with a final chunk of size 0.
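The wire format is simple enough to sketch in a few lines of Java. This is only an illustration of the HTTP/1.1 chunk framing (hexadecimal size, CRLF, data, CRLF, then a zero-size chunk); the class name `ChunkedWriter` and its methods are made up here, and trailers and chunk extensions are ignored.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical helper that frames data as HTTP/1.1 chunks.
final class ChunkedWriter {
    private static final byte[] CRLF = {'\r', '\n'};

    // Writes one chunk: size in hex, CRLF, the data itself, CRLF.
    static void writeChunk(OutputStream out, byte[] data) throws IOException {
        if (data.length == 0) {
            return; // a zero-size chunk would signal the end of the response
        }
        out.write(Integer.toHexString(data.length).getBytes(StandardCharsets.US_ASCII));
        out.write(CRLF);
        out.write(data);
        out.write(CRLF);
    }

    // Writes the terminating zero-size chunk, with no trailers.
    static void writeLastChunk(OutputStream out) throws IOException {
        out.write("0\r\n\r\n".getBytes(StandardCharsets.US_ASCII));
    }

    // Convenience for the demo: encodes each string as its own chunk.
    static String encode(String... parts) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        try {
            for (String part : parts) {
                writeChunk(out, part.getBytes(StandardCharsets.UTF_8));
            }
            writeLastChunk(out);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return new String(out.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Show CR LF visibly so the framing is easy to see on a console.
        System.out.println(encode("Hello, ", "world").replace("\r\n", "\\r\\n"));
        // prints: 7\r\nHello, \r\n5\r\nworld\r\n0\r\n\r\n
    }
}
```

The point for a proxy is that each chunk can be sent as soon as it is ready, without knowing how many more will follow.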

This is how Firefox behaves when using Transfer-Encoding: chunked. This is with the proxy on.

With Proxy: Notice how the css and js files are being fetched along with the first response.

Does this mean that all Sahi had to do was change the headers? No. First, working on a whole string is much easier than working on a stream of data.

For example, if we wanted to change all instances of “blue” to “red”, it would be easy to work on “It is a blue blue sky”. It would not be the same to work on three substrings of the same string like “It is a bl”, “ue blu”, “e sky”. You can see that none of them individually has “blue” in it. A solution in this particular case would be to hold back the tail of each piece, concatenate it with the next piece, and only then try substitutions.
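One way to sketch that carry-over idea in Java is shown below. It is an illustration under assumptions, not Sahi's filter code: `StreamingReplacer` is a made-up name, and it works on already-decoded strings, so it sidesteps character-encoding boundaries. Rather than keeping the last word, it holds back the last `target.length() - 1` characters, which is the longest tail that could still turn into a match once more data arrives.

```java
// Hypothetical streaming find-and-replace that tolerates matches split across chunks.
final class StreamingReplacer {
    private final String target;
    private final String replacement;
    private final StringBuilder pending = new StringBuilder();

    StreamingReplacer(String target, String replacement) {
        if (target.isEmpty()) {
            throw new IllegalArgumentException("target must not be empty");
        }
        this.target = target;
        this.replacement = replacement;
    }

    // Accepts the next chunk and returns the text that is safe to emit now.
    String feed(String chunk) {
        pending.append(chunk);
        StringBuilder out = new StringBuilder();
        int from = 0;
        int hit;
        // Replace every complete match currently visible.
        while ((hit = pending.indexOf(target, from)) >= 0) {
            out.append(pending, from, hit).append(replacement);
            from = hit + target.length();
        }
        // Hold back a tail that might be the start of a match completed by the next chunk.
        int safeEnd = Math.max(from, pending.length() - (target.length() - 1));
        out.append(pending, from, safeEnd);
        pending.delete(0, safeEnd);
        return out.toString();
    }

    // Call once at end of stream to flush whatever was held back.
    String finish() {
        String rest = pending.toString();
        pending.setLength(0);
        return rest;
    }

    public static void main(String[] args) {
        StreamingReplacer r = new StreamingReplacer("blue", "red");
        StringBuilder result = new StringBuilder();
        for (String chunk : new String[] {"It is a bl", "ue blu", "e sky"}) {
            result.append(r.feed(chunk));
        }
        result.append(r.finish());
        System.out.println(result); // prints: It is a red red sky
    }
}
```

The held-back tail is kept as original text, not replaced text, so the output matches what a whole-string replace would have produced.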

Second, and more significantly, you cannot just chain data coming in from an InputStream from the web server into an OutputStream pointing to the browser. Why? Because both network reads and writes via java.io are blocking calls in Java, and such a read and write in a single thread can cause a deadlock. What that essentially means is that we need a common buffer where data is written to and read from, but via two different threads. This is solved well using PipedInputStream and PipedOutputStream (which will be a separate blog post).
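Until that post, here is a rough sketch of the two-thread arrangement using the standard `java.io` piped streams. It is not Sahi's code: `PipedRelay`, `pump` and `relay` are made-up names, the 8192-byte buffer size is an arbitrary assumption, and in-memory streams stand in for the server and browser sockets.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.PipedInputStream;
import java.io.PipedOutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical relay: one thread reads from the server, another writes to the browser.
final class PipedRelay {

    // Copies everything from 'in' to 'out' until end of stream.
    static void pump(InputStream in, OutputStream out) throws IOException {
        byte[] buf = new byte[8192];
        int n;
        while ((n = in.read(buf)) != -1) {
            out.write(buf, 0, n);
        }
    }

    static byte[] relay(InputStream fromServer) {
        try {
            PipedOutputStream writeEnd = new PipedOutputStream();
            // The pipe is the shared buffer between the two threads.
            PipedInputStream readEnd = new PipedInputStream(writeEnd, 8192);

            // Thread 1: blocking reads from the server, written into the pipe.
            Thread serverReader = new Thread(() -> {
                try (OutputStream sink = writeEnd) { // closing signals end of stream
                    pump(fromServer, sink);
                } catch (IOException e) {
                    e.printStackTrace(); // a real proxy would handle this properly
                }
            });
            serverReader.start();

            // Thread 2 (the caller): blocking reads from the pipe, written to the "browser".
            ByteArrayOutputStream toBrowser = new ByteArrayOutputStream();
            pump(readEnd, toBrowser);
            serverReader.join();
            return toBrowser.toByteArray();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        InputStream server = new ByteArrayInputStream("<html>hello</html>".getBytes(StandardCharsets.UTF_8));
        System.out.println(new String(relay(server), StandardCharsets.UTF_8));
    }
}
```

In a streaming proxy, the content filters would presumably sit between the two ends of the pipe, so data can be modified as it flows without waiting for the whole response.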

After a few days of work, Sahi now has a fully functional, much faster streaming proxy, with filters on the streams doing all the data and header modifications. The changes should be available in the next build.